
Redshift unload to S3 Parquet

12/30/2023

With the UNLOAD command, you can export a query result set to Amazon Simple Storage Service (Amazon S3) in text (for example CSV), JSON, or Apache Parquet format. UNLOAD uses the massively parallel processing (MPP) capabilities of your Amazon Redshift cluster, so it is faster than retrieving a large amount of data to the client side. If you need to fetch large result sets from your data warehouse, UNLOAD is the recommended approach.

Parquet output was introduced as Data Lake Export, one of two features AWS launched together to help you manage your data warehouse and integrate it with a data lake. Data Lake Export unloads data from a Redshift cluster to S3 in Apache Parquet format, an efficient open columnar storage format optimized for analytics. The other feature, Federated Query, lets a Redshift cluster query data that lives in external operational databases.

To run the code, install boto3 and psycopg2 (which lets you connect to Redshift), and configure the AWS CLI. Whatever credentials you configure determine the AWS environment the files are uploaded to.

The unload script builds a psycopg2 connection string from `dbname`, `port`, `user`, `password`, and `host_url`, then runs an UNLOAD of the form `UNLOAD ('select * from %s.%s') TO 's3://%s/%s/%s.csv' credentials 'aws_access_key_id=%s;aws_secret_access_key=%s' MANIFEST GZIP ALLOWOVERWRITE`, filled in with the schema, table, S3 bucket name, and AWS keys, and then commits. A separate example uploads files into S3 with Boto3.

The code examples are all written in Python 2.7, but they also work with 3.x. For example, if you want to deploy a Python script to an EC2 instance or EMR through Data Pipeline to take advantage of their infrastructure, it is faster and easier to run code in 2.7.
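A minimal Python 3 sketch of the unload step described above follows. It is a reconstruction under stated assumptions, not the original script: it swaps the embedded access keys for an `IAM_ROLE` clause (which UNLOAD also accepts), and the schema, table, bucket, role ARN, and helper names (`build_unload_sql`, `run_unload`) are hypothetical. Building the SQL is kept separate from running it, and psycopg2 is imported only inside `run_unload`, so the statement can be checked without a driver or a cluster.

```python
import re


def _check_identifier(name: str) -> str:
    """Allow only plain identifiers, since schema/table names are pasted into SQL."""
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", name):
        raise ValueError(f"unsafe identifier: {name!r}")
    return name


def build_unload_sql(schema: str, table: str, bucket: str,
                     iam_role_arn: str, file_format: str = "CSV") -> str:
    """Build an UNLOAD statement writing schema.table under s3://bucket/schema/table_."""
    schema = _check_identifier(schema)
    table = _check_identifier(table)
    # The TO location is a key prefix; Redshift appends slice and part numbers to it.
    target = f"s3://{bucket}/{schema}/{table}_"
    if file_format.upper() == "PARQUET":
        # Redshift compresses Parquet output itself, so GZIP is not allowed here.
        options = "FORMAT AS PARQUET ALLOWOVERWRITE"
    else:
        # Gzipped CSV plus a manifest, mirroring the options in the original script.
        options = "FORMAT AS CSV GZIP MANIFEST ALLOWOVERWRITE"
    return (
        f"UNLOAD ('SELECT * FROM {schema}.{table}') "
        f"TO '{target}' "
        f"IAM_ROLE '{iam_role_arn}' "
        f"{options};"
    )


def run_unload(conn_params: dict, sql: str) -> None:
    """Execute the UNLOAD on the cluster and commit, as the original script does."""
    import psycopg2  # imported here so build_unload_sql works without the driver

    conn = psycopg2.connect(**conn_params)
    try:
        with conn.cursor() as cur:
            cur.execute(sql)
        conn.commit()
    finally:
        conn.close()
```

To use it, call `build_unload_sql("public", "sales", "my-bucket", role_arn, "PARQUET")` and pass the result to `run_unload` together with a dict of psycopg2 connection keywords (`dbname`, `host`, `port`, `user`, `password`). The identifier check is a deliberately simple guard because table names cannot be passed as query parameters.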

I usually encourage people to use Python 3. When it comes to AWS, however, I highly recommend using Python 2.7.

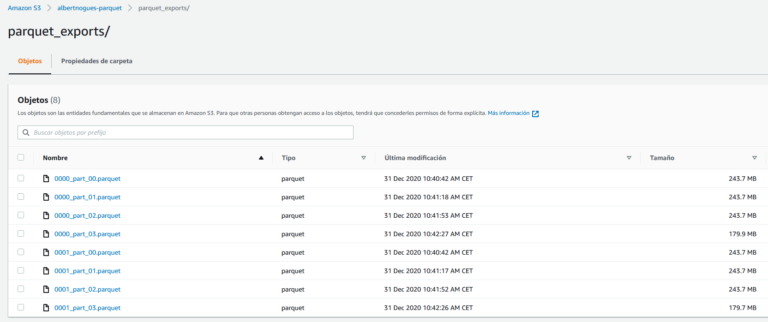

Boto3 (the AWS SDK for Python) lets you upload files to S3 from a server or your local computer. You can load data into Redshift from both flat files and JSON files, and the best way to do it is to go via S3 with the COPY command, because it is simple and fast. In the other direction, you can unload data from Redshift to S3 with the UNLOAD command.

File size is a common question with Parquet output. For example, a customer running a 2 to 4 node dc2.8xlarge Redshift cluster wanted to export data to Parquet at what they considered the optimal size of 1 GB per file, using the option MAXFILESIZE AS 1 GB.
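The load and unload paths in the paragraph above can be sketched as three small Python 3 helpers. This is a sketch under assumptions: the helper names, bucket, and IAM role ARN are hypothetical, and an `IAM_ROLE` clause stands in for access keys. Only `upload_file` touches AWS (through Boto3's `upload_file`); the two builders return SQL strings that you would run over a psycopg2 connection, as in the unload script.

```python
import os
from typing import Optional


def upload_file(local_path: str, bucket: str, key: Optional[str] = None) -> str:
    """Upload a local file to S3 with Boto3 and return its s3:// URI."""
    import boto3  # uses whatever credentials the AWS CLI/environment provides

    key = key or os.path.basename(local_path)
    boto3.client("s3").upload_file(local_path, bucket, key)
    return f"s3://{bucket}/{key}"


def build_copy_sql(table: str, s3_uri: str, iam_role_arn: str,
                   file_format: str = "CSV") -> str:
    """COPY a flat (CSV) or JSON file from S3 into an existing Redshift table."""
    if file_format.upper() == "JSON":
        fmt = "FORMAT AS JSON 'auto'"  # match JSON keys to column names
    else:
        fmt = "FORMAT AS CSV"
    return f"COPY {table} FROM '{s3_uri}' IAM_ROLE '{iam_role_arn}' {fmt};"


def build_parquet_unload_sql(query: str, s3_prefix: str, iam_role_arn: str,
                             max_file_size_gb: float = 1) -> str:
    """UNLOAD a query to Parquet, capping each file at max_file_size_gb."""
    # Redshift documents 6.2 GB as the largest allowed MAXFILESIZE.
    if not 0 < max_file_size_gb <= 6.2:
        raise ValueError("max_file_size_gb must be > 0 and <= 6.2")
    # Single quotes inside the UNLOAD query text are escaped by doubling them.
    escaped = query.replace("'", "''")
    return (
        f"UNLOAD ('{escaped}') TO '{s3_prefix}' IAM_ROLE '{iam_role_arn}' "
        f"FORMAT AS PARQUET MAXFILESIZE {max_file_size_gb} GB;"
    )
```

On the file-size question, note that MAXFILESIZE is a cap rather than a target: with the default PARALLEL ON, each slice writes its own files, so the resulting Parquet files may come out well under 1 GB when the unloaded data is small relative to the number of slices.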
